First of all, let’s define what we mean by “a soul.” For the purpose of this discussion, we’ll define a soul as something that goes beyond the physical body, a concept that conveys immortality. So whether or not you have a soul seems like a big deal, according to our definition.

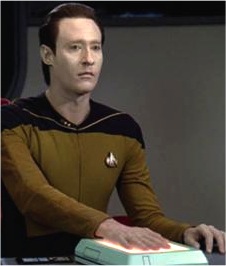

In 1989, Star Trek: The Next Generation aired an episode called “The Measure of a Man.” In this episode, Commander Data, an android, is declared the property of Starfleet, the military organization, which claims that “it” (Data) does not have the same rights as “sentient” biological beings.

http://en.wikipedia.org/wiki/The_Measure_of_a_Man_(Star_Trek:_The_Next_Generation)

It struck me at the time that in the enlightened future world of Star Trek: TNG, the government would never declare an android such as Data “property.” So far as we know, biology aside, the only difference between Data and a human is Data’s inability to experience emotions. However, Data’s creator, Dr. Noonien Soong, programmed Data to be thoughtful and considerate, apparently with a will to serve mankind. Data chose his career path, served Starfleet, fought battles, saved lives and, by the time we meet him, is third in command of the Enterprise-D.

But real life frequently reminds us that the pressures of maintaining a military organization, or a corporation for that matter, can be dehumanizing. So now, twenty-five years after my initial skepticism of the premise, I can see just how easy it could be to justify sacrificing Data for the chance to create more of him. The officer trying to take possession of Data suggests that having an android like Data on every starship would be a great advantage.

Many years earlier, Spock was fond of saying, “The needs of the many outweigh the needs of the few.” The question then boils down to this: who does the choosing for the few? Today, if you join the military, you have given them the authority to make that decision for you. You don’t get to pick the wars you fight or the people you kill.

In that sense, Data was being ordered into battle, which, as a military officer, he cannot refuse. Accordingly, Data resigns his military post, which Starfleet insists he cannot do any more than the ship’s computer can resign. Now we come to the real dilemma.

During the subsequent court trial to determine Data’s status, Captain Picard does a pretty good job proving that Data is a sentient being.

But finally, Starfleet Judge Advocate General, Captain Philippa Louvois, asks, “Does Data have a soul?” This was before the idea of being connected to a “universal mind” had made its way into popular culture via “The Secret,” Wayne Dyer, Oprah, Eckhart, et al. Captain Louvois states that she doesn’t know if she even has a soul herself. But if such a thing as a soul exists, Data has the potential to have one and should be allowed to figure that out for himself.

That takes us to the year 2014. In my novel, “Disappearing Spell,” I had to figure out if George Spell had a soul. George Spell is a clone of Alex Detail, created by the government to serve as a backup in case anything happens to Alex, who has already lost the genius of his own youth. George Spell appears to be about seven years old, but he has been artificially grown over the course of two years and programmed to retain all of Alex Detail’s childhood intelligence, without the fear of death that sometimes makes Alex unwilling to help the war effort. George will do anything to protect humanity. This includes killing Alex and his biological mother, Zarena. Alarming, I know.

The story of “Disappearing Spell” is an expansion of events that occurred in “Alex Detail’s Rebellion.” Zarena has George kidnapped so she can send him off to die in a decisive battle, though meanwhile she can’t help but wonder if her clone-son might have the potential to have a soul. So far, George’s lack of empathy for “the needs of the few” seems to indicate he is nothing more than a weapon, with all the self-determination and compassion of a Tomahawk missile.

While Zarena does her best mothering in the short time she and George have together, reading him fairy tales and telling him killing is wrong, she seems to be making little progress. However, George has become friendly with Zarena’s pet cat. When the cat is taken to be put to sleep, something happens to George. Somehow, empathy emerges. George goes on to risk his life to save the animal. He overrides all the programming that tells him how important he is to the fate of humanity because he just can’t help himself.

It occurs to me that during Data’s trial in the episode of Star Trek: TNG, there was no talk of empathy. It was understood that even though Data didn’t have emotions, his actions always demonstrated empathy. And in later seasons of TNG, Data gets a pet cat, Spot, which he cares for tenderly.

Ultimately, the “values” of life (being human, having a soul, being a person who adds more than they take) can be readily seen in how we consider other life, be it a cat, a grocery clerk, an employee or even an enemy combatant like the Borg Hugh. It’s the most abhorrent thing to think that any living thing might not have the potential to have a soul, even a machine that just acts like a person.

Why were the writers and producers of Star Trek: TNG compelled to demonstrate how much humanity an android could possess? And why, in a later episode, did they take their most inhumane creation, “The Borg,” and demonstrate its capacity for empathy in the captured Borg Hugh? I don’t know, but I have a feeling it is not too dissimilar from why I could not allow George Spell to be anything less than humane. To see the potential for goodness in all things is human.

So, back to my original question: Do you have a soul? Who am I to tell you the answer to that, when there are ancient writings and modern television shows devoted to exploring this potential?